Summary

Generative art made with multidimensional mathematics discovered personally at the end of the 1980s. All art is created personally, from beginning to end: mathematics, software, images, mosaics, animations, text. Six unique microstories about famous people who are involved in the NFT and cryptocurrency space, personalized with details from their lives. Art minted as NFTs over the coming months and years. Private content that allows NFTs to contain images in 16K resolution, and animations in 8K resolution, only for the buyers, guaranteed to be original to future buyers, storable anywhere. A story about how it all happened. A story about why the multidimensional mathematics matters. A story about how Reality might work. Expansive work published online since the end of 2001. Custom software that renders mathematical equations in images and animations with a phenomenal technical quality. Art turned into puzzles that can be solved in the browser.

Contents: Introduction; Art Language; Art Mathematics; The Art of Reality; Art Motion; What is Change?; From Ugly Duckling to Breakthrough; Multidimensional Numbers; Multidimensional Rotation; Relativity; Wiring Of Reality; The Plan; A Glimpse Into Future; NFT Content; Resolution and Quality; Custom Genart Requests; Viewing the Genart; Viewing on Computers; Viewing on TVs; Printing; Architectural Design; License Terms; Definitions; Public Content; Scarcity; Markedly Different; History; Buyer's Checklist; Test Before Buying; NFT Structure; Metadata; Storing Content; Private Content; What Are NFTs?; Puzzles; Investing in Mathematics; Publishing the Equations; Your Own Genart; Prizes; Progress Further; Other People's Work; Epic Soundtracks

Introduction

At the end of the 1980s, when I was a teenager, I asked myself why the complex numbers aren't generalized to any number of dimensions, so I tried to do it on my own. More than thirty years and four software applications later, this is the result. I am Void Sculptor and these are my NFTs with images and animations created with this multidimensional mathematics. Time, energy, mathematics, computer science, color science, philosophy, physics, psychology and language were necessary to reach this point. An obliterating effort made across the decades to breathe fire, passion and motion into the timeless and immutable Reality created by cold equations. More than a unique story, a mesmerizing world of delicacy and magnificence is emphasized in the "Wiring Of Reality" genart. Continue reading below for the story behind this project, wonder about the nature of Reality, and see the beauty of equations that you never imagined existed. Follow the project to see mathematics reborn as art that you can only fall in love with.

Beginnings, early 1990s: 117 Billion

Art Language

My story

It all started when I was 12 years old and I created my own board game, a resource-based game with a huge hand-drawn map of a fantasy continent. I remember seeing in my grandparents' house a huge map of Australia, drawn by hand by my father's sister, perhaps for a school project, that catalyzed my interest in mesmerizing distant lands and map drawing. Later, I became obsessed with logic. Obsessed not in the sense that I follow it, but in the sense that it follows me and doesn't go away until I do what it wants, while going through everything over and over, to absurdity, so that signal can be extracted from noise. For more than thirty-five years, this obsession led me deep into projects from various domains, including board game development, writings (artistic and technical), psychology (language and intelligence), mathematics (multidimensional numbers, around 15...17 years old, at the end of the 1980s), and physics (trying to understand what Reality and Time are). If you follow the pattern to its source, everything is about language, about words that forge themselves in a precise path toward understanding. This pattern makes me feel like I have no boundaries when forging ideas with words, when carving streams of meaning into the void. On my journey of enjoying the written word in the form of adventure, SciFi and mystery novels, my artistic inclination has pushed me toward creating a card game set in a SciFi world. Over many years, I've immersed myself in a world of graphical art from various artists, and I've gathered fragments of text that eventually ended up making the lore for the game. But this game was only meant to be a precursor for a novel set in that SciFi world. The path to its completion is filled with notes, experiments, and outbursts of creativity that may or may not be a part of the final novel, but they've inspired my imagination to wander in this Universe and others.
It remains unknown whether the journey of the novel will reach its destination, but the notes may one day be glimpses into its past. Some of the notes might not make it into the novel even though they are just as good as those that will make it, but since they are an outburst of artistic creativity, they may not fit in the novel's storyline. But they will live as words.

Art Mathematics

For people who think Reality is not what it appears

The Art of Reality

This is the story of the Art of Reality. To bring this form of art to life, I had to discover a new mathematics related to multidimensional numbers, and I had to build custom software to turn the mathematics into color. Time. That was necessary. And effort that has spanned more than thirty years. Decades of Change, of the very substance that makes Reality. Change is real, at least to people. But mathematics exists outside of Change, and even though people think of it as not being real, it describes Change, it describes the real thing. Is this description the creation source of all Reality? How can a subset of mathematics describe the Universe, while the rest of mathematics can't? How odd it is to have a separation between mathematics that can describe Reality, and mathematics that can't. What makes an equation that is rendered by a computer less real than the equation that describes this Universe? It's not possible to prove a system from within itself. You have to see it from outside of what you're trying to prove, so you have to be outside of the simulation in order to be able to tell whether it's a simulation. This creates an endless loop of something external that makes something internal, a loop that blurs the difference between the informational Reality created by mathematics, and the natural Reality created by some unknown magical ingredient, until you can no longer tell the difference. But if you can't tell the difference between a natural Universe and one simulated with the same equation, how can you claim that there is a difference? What is the magical ingredient that makes the difference? Could there be such a magical ingredient? There could be, but a magical ingredient that could exist, yet has no logical or practical constraints, and can't be observed, analyzed or dismantled into components (with minimal informational content to describe them), is similar to a god.
If you can't observe such a magical ingredient, if you can't tell or logically claim that there is a difference, doesn't that mean that, for all practical purposes, there is no difference to begin with? This would mean that Reality has no characteristics outside of mathematics, which would mean that Reality is informational, abstract, which would mean that any piece of mathematics can create some sort of Reality. If mathematics can explain Reality, why would anything else be required to be the explanation? Sure, there might be some other explanation, but why would that be required? People like to ask in jest whether this means that a circle is real, a jest born out of ignoring the difference in complexity of characteristics between a circle and this Universe. A circle does not have the properties of this Universe, and therefore can't produce anything of similar complexity. For all intents and purposes, a circle has no properties other than those already known, it experiences no change, so its realism is just as irrelevant as it always was. A good question would be to ask how many instances of a circle are out there, and what instantiates them. The equations that are known as laws of physics and describe (not create) this Universe, are like equations that describe various structures inside a fractal, and the relationships among them. None of those equations made those structures, never mind the entire fractal. They are mere descriptions of various characteristics of the actual (unknown) equation that created the fractal (or Universe). Let's take the example of the mathematical equation that describes a pendulum's motion. Does it create a real pendulum? No, because that equation is a simple description of the motion of the pendulum. The pendulum itself, as an object, is a pattern that people can identify within the equation of the entire Universe, an equation whose evolution started at the Big Bang.
So, the pendulum is a pattern created by the equation of the entire Universe after billions of years of evolution, not instantly created by the equation that describes its motion. But a real pendulum can be moved outside of the Universe, right? People are inclined to think that this is possible because there is a general belief that the Universe and the objects within it are made of particles that can be moved around, like balls in a game of pool. In reality, the pendulum is made of parts, of molecules, of atoms, of quarks, of forces and fields, and all of those only exist because the progression of the Universe has evolved to the point where a pendulum could arise from it and be identified as such. The pendulum can't exist outside of the Universe since nothing would maintain the laws of physics that drive its every part. In their minds, people can remove a pendulum from the Universe because they're simplifying the world, and their train of thoughts is not constrained by the entire chain of causality of the pendulum. But the physical pendulum is constrained and, therefore, can't exist outside of those constraints, outside of the Universe. Perhaps a copy of the pendulum could be made and placed outside the Universe. However, in this case the medium that makes the pendulum was changed, so there are new laws of physics that drive such a copy, which means that people have to ask themselves where these laws came from. What would breathe life into the new pendulum? Nothing, as its own progression is no more to drive it. So, there is no collection of ball-like particles that can be moved outside of the Universe, outside of the equation that made it. But if particles aren't independent balls, why would anyone think that there are particles that can be moved through the Universe? That makes no sense. 
What makes more sense is that particles are actually wobbles of the fields, with no independent life of their own, wobbles that can only follow the equation that made the Universe. This means that this Universe is one thing, one progression, and there is nothing outside of it, at least not in the sense of spatially separated things. A computer simulation that is identical to the natural Universe, made by the natural people, is different than the natural Universe because the simulated matter doesn't interact with the natural matter in the same way that natural matter interacts with natural matter. But the simulated matter manifests for the simulated people exactly as natural matter manifests for the natural people, to the extent that the simulated people can't tell that they live in a simulation. Natural people can tell the difference since they've made the simulation. But how can natural people tell whether they themselves are simulated or not? It's possible that the natural people are living in a simulation made by people from another "more natural" Universe, and so on in an endless loop of "more natural" Universes. Right at this moment, as you read these words, a being from the Universe that simulates this Universe may be pointing at you and saying that you truly believe that you are natural / real, though that being knows that you are simulated. Without a magical ingredient that makes the difference between natural and simulated, ingredient that people haven't found for millennia, there is no difference, and therefore Reality can only be abstract / informational / mathematical. As if this wasn't strange enough, this Universe could be a simulation that exists only for the time it takes a being from the simulating Universe to blink. Yet, at human scale it feels like billions of years pass. 
This entire argument isn't saying that this Universe is a computer simulation started in another Universe, but it's saying that there is no magical ingredient that makes the difference between natural and simulated, other than the level of imbrication in the potential tree of simulations, level that you can only see from outside the simulation. For example, if people were to make a computer simulation that's very close in detail to this Universe, that simulation would be on level +1 (of imbrication). But this Universe itself could be on its own +1 level in another Universe. And there is no magical ingredient that could say that this Universe is the root of all levels of simulations, the one true natural / real Universe. The levels of simulation are similar to the infinite nature of a fractal, in which it's possible to zoom in endlessly and see similar shapes. Is there a root of all levels? There may be, but there is no magical ingredient that makes the difference between a natural and a simulated level. The creation source of a natural Universe appears to be the same as the creation source of a simulated Universe: mathematics, specifically, a mathematical progression. A progression is the periodic application of a set of rules on the current state of the progression, starting from an initial state, creating minute differences between consecutive states. Usually, the rules and the initial state can be described with very little information, compared to the information that is required to describe other states of the progression. Why don't people like to think that a real Universe is created by abstract mathematics alone? Because they think that mathematics isn't affected by anything from either inside or outside. If you create a Universe as a mathematical progression, can you put a finger in it and affect change in that Universe, or will it continue to follow the progression unaffected? In other words, is that Universe reactive to external change? 
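The definition of a progression given above (a fixed set of rules applied repeatedly to the current state, starting from an initial state) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the rule `step` and its constant are hypothetical and are not the equation behind the genart.

```python
from itertools import islice
from typing import Callable, Iterator


def progression(rule: Callable[[complex], complex], initial: complex) -> Iterator[complex]:
    """Yield the initial state, then every state obtained by re-applying the rule."""
    state = initial
    while True:
        yield state
        state = rule(state)  # the output of one iteration is the input of the next


def step(z: complex) -> complex:
    # Hypothetical rule, chosen only for illustration: square the state, then shift it.
    return z * z + complex(-0.4, 0.6)


# The rule and the initial state take very little information to describe;
# the later states are not stored anywhere, they are enumerated on demand.
first_states = list(islice(progression(step, 0j), 5))
```

Running the same rule from the same initial state always enumerates the same states, which is the sense in which such a progression is unaffected by anything outside of it.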
For people who believe in something real beyond abstractions, it's obvious that a recording of a Universe is not real because if it's played multiple times it can't change on its own, since there is no free will in a recording, and it can't react to someone from the outside putting a finger in it. The problem of thinking that a Universe can be real only if it reacts to external change, is that there must always be an external finger that can affect each Universe, in order to make that Universe real. Where does that finger come from? From another Universe, Universe which, in order to be real, requires a finger external to it, and so on. Therefore this requirement can't be true. The reason why consciousness, intelligence and behavior appear to have free will and are adaptable to the environment, while being the product of the evolution of a mechanistic Reality, is the feedback loop that the progression of this Universe contains, loop where the output of the mind becomes its input and further modifies its output. If full free will is understood as an ability to make decisions without following the laws of physics, and by breaking the chain of causality (which started at the beginning of the Universe), what are those decisions based on? What triggers them? What is their chain of causality? If there is nothing to base the decisions on, if there is no chain of causality, it means that they are made randomly (for example due to quantum fluctuations). But if they are made randomly, it means that there is no self / soul that makes the decisions without following the laws of physics. Full free will is an illusion born from a brain's observations of the environment. For example, a brain can detect that, in the past, either its left or right hand had moved, so it knows that in the future the same could happen again. "Detected" means that the brain has traces / memories of the past. 
The movement of the hand appears to be chosen by the brain because its future movement is unknown, complex, chaotic, like the movement of a ball in a game of pool. But just like the ball can't choose its path, the brain can't choose the movement of the hand. It's the progression of the Universe that causes the movement. In this progression, movement in a three-dimensional space (= translation) is symmetric (= reverting movement doesn't affect anything else). On the opposite side is gravity. Gravity is a law of physics which has a single direction of effect, that is, it's asymmetric. So, anyone who thinks they have full free will should jump in the air and float by choice. Breaking the unidirectional effect of gravity would be proof that something called "you" can interfere with the purely mechanical progression of the Universe, and can choose to make a choice outside of the chain of causality of the Universe. How come mathematics exists, timeless and immutable, and without being caused by something? Now, that is the right question!

Was mathematics invented by people? If anyone can invent a circle whose Pi has a value of 4 (aside from the one with a value of 3.14) then mathematics was invented, otherwise it was discovered following the constraints of this Universe, which means that outside of this Universe, unknown mathematics may exist, based on rules that people can't even imagine. Mathematics can be reduced to a set of rules. For example, the integer numbers are states in a progression rather than a list where all the elements are already laid out. This means that the integer numbers exist only when they are used / instantiated from their progression, by another progression. So, the ultimate question is: how come these rules exist, universally? From this point on, there are no more constraints of Reality that can guide the thought process, so only speculation can provide possibilities.

Art Motion

For people who find philosophy and physics interesting

What is Change?

What you will see in the animations below will surprise you, at least if you consider that the mathematical operations involved are rotation and translation, albeit iterated. Mathematically speaking, there is a domain, an abstract coordinate space where there is nothing other than distances, in which an equation is applied to produce a codomain. The codomain appears to be more than an abstract coordinate space, especially when the equation is a progression. The codomain appears to manifest, to have presence, to behave like a substance, because the distribution of the codomain coordinates / values changes. A critical goal I had (and achieved) was to have every pixel computed independently of other pixels, even in animations. This means that each pixel can be computed on its own, without computing any other pixel, as if each pixel exists alone. Yet, an entire animation looks as if every pixel influences its neighbors, in Space and Time, like the molecules of a fluid in motion. This is in contrast to many visual effects (from video editing) that require many adjacent pixels to be processed in order to apply the effect on a single pixel. The animations below look as if there is a fluid made of particles that influence one another through a chain of causality that is propagating from particle to particle. But there are no particles, no forces, no influence, no chain of causality, and no time (only order). Every frame of the animation is merely the next static image that is part of a progression. Every pixel has no idea of any other pixel, that is, pixels don't influence one another. Yet, Change can be seen flowing across distances. It looks as if things influence one another... in Time. You can see shapes that change and flow. You can uniquely track the shapes while they change, you can see the contiguity of their identities as they form, progress and dissolve, contiguity which is perceived as a chain of causality.
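The property described above, that every pixel of every frame can be computed alone, can be sketched as a pure function of the pixel's own coordinates and the frame number. This is a minimal sketch, not the author's software: the Julia-style formula, the constant `c`, and the way `frame` shifts it are all assumptions made for illustration.

```python
def pixel_value(col, row, frame, width, height, max_iter=50):
    """Compute one pixel from its own coordinates and the frame number only."""
    # Map the pixel to a point of the domain (a square from -1.5 to 1.5).
    x = col / (width - 1) * 3.0 - 1.5
    y = row / (height - 1) * 3.0 - 1.5
    z = complex(x, y)
    # Hypothetical animation parameter: the constant drifts slowly with the frame.
    c = complex(-0.8 + 0.002 * frame, 0.156)
    for i in range(max_iter):
        if abs(z) > 2.0:
            return i  # the point escaped after i iterations
        z = z * z + c
    return max_iter


def render_frame(frame, width, height):
    """Render a whole frame; no pixel reads the value of any other pixel."""
    return [[pixel_value(col, row, frame, width, height) for col in range(width)]
            for row in range(height)]
```

Because `pixel_value` reads nothing but its arguments, a single pixel computed in isolation equals the same pixel taken from a fully rendered frame, and the pixels could be computed in any order or in parallel.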
But there is no magical ingredient called "change" in there. In the language of quantum mechanics, no information travels from pixel to pixel to make them locally connected or remotely entangled. There are no local hidden variables. It's all determined by the progression. In the world of this progression, an observer is not the cause of what happens, but the effect of what happens. The observer doesn't cause the collapse of the quantum wave function by observing it, the collapse causes the observer to observe it. This could indicate that people's observation and belief that this Universe is made by particles influencing particles, creating persistent complex structures out of linear and circular trajectories zipping around the Universe, could be fundamentally flawed. This raises one of the most important questions in the history of the Universe: what is Change? What does it mean that things change? What is the source of Change? The common knowledge about Change is that some fundamental forces are pushing particles around, independently of one another, making different structures. But to push things you need forces and energy. So, what are these? The problem is that this train of thoughts doesn't break away from the classical path: there is something magical that pushes things into new structures, out of the void or randomness. It seems as if there is something that meddles with individual particles in order to make the Universe work in some purposeful way. It appears as if Change is like wiggling a finger in a lake. Or so have philosophers and theologians thought for millennia, asking themselves subtle questions, but with little knowledge about the world unseen. The molecules of water from the lake don't wiggle by themselves, they must follow a chain of causality throughout the lake. But the finger is outside of the lake, which means that Change comes from outside of the lake. 
Yet, in this Universe, nothing has been observed to meddle with individual particles and break the chain of causality that started at the beginning of the Universe, so all Change must come from inside the Universe, even if initially it was, perhaps, outside. This is like a game of pool where the balls precisely follow trajectories, after being hit with a cue stick. At no point in the progression can the balls choose to not follow the chain of causality, but it is possible to interfere with them from the outside, with a cue stick. Quantum fluctuations may be small, (pseudo)random changes that work as if they are external to the deterministic progression of this Universe, so they may work like wiggling a finger in a lake, so they may make the future not purely deterministic. But if you think that quantum fluctuations are naturally random, you have to ask yourself what is natural randomness if not deterministic, mathematical chaos, with an unknown evolution equation and state? Outside of this explanation, what does it even mean to be naturally random? Where is that (random) information coming / instantiated from? What is the magic ingredient that makes the difference between natural and deterministic randomness? But if Change is the independent transformation of every particle in the Universe, starting from the Big Bang, how do particles, that move around linearly, and sometimes in circles, know how to form the same kind of complex structures even when separated by great distances, unlike the balls in a game of pool? The balls from a game of pool don't form the same persistent structures across space and time. Even if they were to randomly form complex structures here and there, they would not be maintained for long, in contrast to how they are in the Universe. It looks as if the way people think the Universe works is not the right path to follow. 
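The idea of deterministic, mathematical chaos that looks random can be made concrete with a textbook example, the logistic map. It is used here only as a stand-in to show how a fixed rule and a hidden state can produce unpredictable-looking values; it is not claimed to model quantum fluctuations.

```python
from typing import List


def logistic_orbit(x0: float, steps: int) -> List[float]:
    """Iterate x -> 4 x (1 - x), a textbook chaotic map on the interval [0, 1]."""
    orbit = [x0]
    for _ in range(steps):
        orbit.append(4.0 * orbit[-1] * (1.0 - orbit[-1]))
    return orbit


a = logistic_orbit(0.2, 200)
b = logistic_orbit(0.2, 200)          # same rule, same state: the same "randomness"
c = logistic_orbit(0.2 + 1e-12, 200)  # a state that differs in the 12th decimal

# Largest difference between the two nearly identical starting states.
largest_gap = max(abs(p - q) for p, q in zip(a, c))
```

The orbits `a` and `b` are identical, while `a` and `c` drift far apart, so an observer who doesn't know the rule and the exact state sees values that are, in practice, unpredictable.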
It looks as if particles must have some common trait, a preexisting informational pattern they follow, pattern that describes their common transformations across any distance. It looks as if everything is a complex and contiguous progression, where the output of an iteration is the input for the next iteration, forming an apparent chain of causality. It looks as if the Universe is produced by a mathematical equation that has nothing to do with pushing things around within some "real" scene, but with a special kind of transformation in the abstract mathematical space. It looks as if Change is the evolution of a mathematical progression. The progression is somehow instantiated and enumerated, and people perceive this as Change, as Time. This process is what turns abstract mathematics into (what people call) real things. It looks as if "movement" is merely the name of a pattern that describes a narrow part of the progression's evolution, pattern that people extricate from the progression in order to simplify its complexity, and they can do so because of the (translational) symmetry of this pattern. This simplification is why the laws of physics appear to work symmetrically when Time moves (theoretically) either forward or backward, while the progression of the Universe doesn't. The laws of physics merely describe human-comprehensible symmetric patterns within the bigger asymmetric progression. Looking back at the example with the game of pool, and this can be treated from a purely mathematical perspective, once the balls are hit with a cue stick, they follow a progression. The movement of the balls can be described with linear trajectories and deflections, yet, the balls can't move back (in Time) because their progression doesn't contain such evolution. The progression's evolution makes Time itself, and there is no dimension of Time where the balls could move back, counter to their progression. 
Considering that the current scientific knowledge is correct, but changing the language to more precisely describe the known Reality, Change is a mathematical progression that possibly started with the Big Bang, and Time is the iterative nature of this progression. Speaking simplistically, Change could be a three-dimensional progression (where things can "move" back and forth) that moves in a fourth dimension (and does so in only one direction), and gravity and acceleration could be a type of drag effect (of the progression while it's moving) through the fourth dimension. So, where gravity and acceleration are high in the three-dimensional progression, things are "left behind" in the fourth dimension. What creates complex structures across distances, out of apparent linear and circular movement, is the nature of the progression. The complexity of this Universe didn't appear out of randomness, it's the result of a precise progression. What can be said about this progression? Let's say that infinity exists, and an infinity of rules exists, and an infinity of combinations of the rules exists. Let's also say that the rules that make this Universe are a subset of infinity; people can't observe outside of the Universe, so they can't see if there are unknown rules. Now consider the fact that nobody ever saw different rules for the Universe. It appears that the subset of rules that make this Universe was somehow selected from infinity, and fixed, right from the beginning (= Big Bang), so somehow rules can form (potentially) immutable sets. The reason why nobody has ever seen half of the Universe just vanish into the void is that this Universe is a contiguous progression that contains no such dramatic changes. This contiguity was never observed to be broken, like objects and people appearing from and disappearing into thin air, or people suddenly getting a third eye or hand. 
This further enforces the idea that this Universe can only progress one way, the way of the progression, perhaps with some influence from (pseudo)random quantum fluctuations. This works against the idea that everything that can happen does happen in a parallel Universe, where there are supposedly many versions of you in different states. If that were the case, there would be a statistically relevant number of cases in which people would suddenly get an extra finger, literally out of thin air. But this doesn't happen because Reality, or at least this Universe, is neither random nor probabilistic, but can only progress one way. It's possible to say that non-contiguous events aren't visible because the probability is too low. However, you have to consider that the Universe contains a lot of things, and humans have observed (scientifically) for a very long time, yet it's perfectly contiguous. Add to this the fact that the laws of physics don't seem to change. Going down to the quantum level, the spin and the mass of an electron don't vary probabilistically in the same experiments that show quantum probabilities. Why are these things constant? Because there are rules which predate quantum mechanics, and they were set a long time ago. Whatever quantum mechanics does, its macroscopic result isn't probabilistic, but is collapsing on the deterministic patterns of the laws of physics that predate it and set the rules for what can happen, meaning that the number of fingers of a hand isn't probabilistic. This means that quantum mechanics is not fundamental, or, at best, is only a part of the fundamental rules. This is in complete contrast to the idea that (all) things could be different in a parallel Universe, because, if they were, that would raise the question of why they're never different in this Universe, and why the rule of fingers of a hand never changes, no matter how many photons and people go either left or right. 
You could make a vague case that accidents (that would result in fewer fingers) are the probabilistic result of quantum mechanics over a long period of time, instead of the result of a chain of causality, but then you have to ask why it's so rare and why the number of fingers never increases (or at least why the probability is so skewed in favor of staying the same or of decreasing). As for Schrödinger's cat, it will never be alive when the box is open, that is, its state is not probabilistic in this Universe. This means that the (pseudo)random nature of quantum fluctuations has a minuscule effect over the entire progression, but without them, the Universe would likely progress with little detail / variation. But if you extricate the (pseudo)randomness of quantum fluctuations from the Universe, what remains is the deterministic progression of the Universe where the rule of fingers of a hand never changes. Change might be reactive to external influence. For example, if in a game of pool a player (= the external influence) places an object in the path of a moving ball, the ball will react to that obstacle rather than continue to follow its original path. This Universe may be behaving the same way under the influence of (pseudo)random quantum fluctuations. The progression contains traces of its past, included in the present, and this makes it possible to determine, to some extent, the progression's past. Change appears to be simultaneous everywhere, that is, there is no driver that moves throughout the Universe causing Change to happen from point to point. If two objects start from a common location and move in opposite directions, they affect the environment simultaneously, without one waiting for the other to finish affecting the environment. When things on Earth change, things in the Andromeda galaxy also change, they don't wait for the things on Earth to finish changing. You can read about relativistic effects later, and about pseudo-simultaneity.

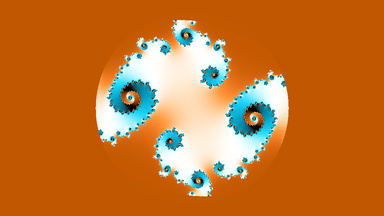

From Ugly Duckling to Breakthrough

I've been disappointed by how multidimensional numbers work ever since I saw that, in at least three dimensions, multiplication isn't distributive over addition, in contrast to how it is for one and two dimensions. This means that the commonly known symmetry of two-dimensional rotation is lost, so the equation renderings are distorted when using the third dimension. Indeed, once I rendered various equations, the result was ugly. In the best cases, it looked like clothes being wrung to squeeze the water out of them. In the worst cases, it looked like noise. For years, the theory of multidimensional numbers remained like a stain in my past, hovering like a reminder of failure, despite it being the way that mathematics actually works. I wasn't able to discover a better way. More than thirty years after the original discovery, in February 2021, the NFT revolution pushed me over the edge of passivity and I told myself that I could create NFTs with my card game lore. The work started with the desire to use the text itself, not images, so as to spare myself mountains of work, but evolved into months of building a software application that could render the lore in thousands of calligraphic images, until I realized that I could also create NFTs with my old renderings of mathematical equations. But that felt naive and obsolete, considering the esthetic quality that fractals have today. So, I started wondering what I could bring to collectors that would be different from anything in existence. More months of work followed, massively updating an old software application that can now render mathematical equations into colorful images of exceptional technical quality. In total, the work related to NFTs, until minting, has taken well over 1'500 hours (spread over 21 months) of building on top of existing work that was developed over the decades.
In an effort to find my own path, I wanted to move away from the excessive symmetry of fractals, away from endless zooms that felt like navigating through a static world, and away from animations where shapes were moving around, cycling in space, without influencing the surroundings. I wanted asymmetry and light, and most importantly, during animations, I wanted more than a mere change of colors and shapes. I wanted animations that felt like a fluid, like a changing world, like everything was influencing everything, like matter pushing, pulling and bending matter. I wanted a mathematical lava that gives birth to strange, new worlds. For this, I've reluctantly experimented with animations; reluctantly because I was expecting to see the usual fractal animations, or noise. My work on Change and Time had been done almost a year before, so I already had a taste of what Change should look like. This led me to use a two-dimensional shape known as a Julia fractal, but replace its internal two-dimensional rotation with a three-dimensional one, that is, with a rotation of the set of multidimensional points that make up the fractal, around the point of origin of the entire set. The result was the one that previously looked distorted. Then, I added an animation that moved the fractal in the third dimension. After decades of waiting in the dusty corners of my mind, the multidimensional rotation combined with moving in the third dimension left me in an emotional state that can only be described as jaw-dropping, as it turned out that asymmetry can create stunning beauty when it's part of a progression. From ugly duckling to breakthrough. From desert to paradise. From static to dynamic. Metamorphosis emerged in real time. The mathematical fluid of Reality arose before my very eyes.
After an astounding journey of irrational following of my progression across thirty-five years, peppered with ecstasy and abandonment, but mostly with obsession, I give you the pioneering work that may one day change the way we think about Reality, the first animation ever to be made with a multidimensional rotation, "Breakthrough".

Breakthrough, 2021

XY (around its center) → XY

XY (around its vertical middle) → XZ

XY (around its horizontal middle) → YZ

But what would happen if instead of rotating the Julia fractal, we move it in the third dimension? A bit of mathematics is required. The equation that describes this fractal has the form "m * m + c", where "m" is the domain, and "c" a constant. We want to see the (a)symmetric behavior of the two-dimensional rotation that is normally used internally by this fractal, when the fractal is moved in the third dimension. The correct way of doing this is by using the multidimensional rotation, but we want to deliberately avoid this, so we use conceptual approximations that allow us to think about scenarios in which we don't know the correct three-dimensional mathematics.

For this purpose, the multiplication must act as a rotation only in the first two dimensions (in the XY plane), so the second "m" from the equation, henceforth identified as "w", must have its second angle equal to 0, so its third-dimension Cartesian coordinate must be 0. We do this so that, while the first "m" has a third dimension, the result remains in the same XY plane, that is, the Z coordinate of the result doesn't change. The other important thing is the radius of "w", and there are several scenarios:

Scenario 1: The radius of "w" is equal to the radius of "m" only in the first two dimensions. This should be the most accurate scenario because the Cartesian coordinates from the first two dimensions of "w" remain unchanged, while the one from the third dimension is 0. From the animation, you'll see that the symmetry of the fractal is maintained, and this indicates that the assumption is correct. Mathematically, the assumption is correct because multiplication is distributive over addition when the degree / dimension of the common MDN is at most 2, so "m * (p + q) = m * p + m * q" when the degree / dimension of "m" is at most 2, which is what this scenario is equivalent to.

Scenario 2: The radius of "w" is equal to the radius of "m". Here, the Cartesian coordinates from the first two dimensions of "w" must change to match the radius of "m".

Scenario 3: The radius of "w" is equal to 1, so the radius of the codomain is equal to the radius of "m". Here, "w" only rotates "m", so there is no squaring of the radius, which makes this scenario unrelated, but it looks interesting.

Scenario 4, MDN: Here, "w" is equal to "m", so the rotation is multidimensional. This is for reference.
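The four choices for "w" can be sketched in a few lines of Python. This is only an illustration of how "w" is derived from "m" in each scenario, assuming "m" is given as Cartesian coordinates (x, y, z); the function name `build_w` is hypothetical, and the multidimensional multiplication itself is not shown here.

```python
import math

def build_w(m, scenario):
    """Return the second factor "w" for a 3D point m = (x, y, z).

    Hypothetical helper: it only builds "w"; it does not multiply.
    """
    if scenario not in (1, 2, 3, 4):
        raise ValueError("scenario must be 1, 2, 3 or 4")
    x, y, z = m
    if scenario == 4:
        return (x, y, z)                 # reference case: "w" is "m" itself
    if scenario == 1:
        return (x, y, 0.0)               # keep X and Y unchanged, set Z to 0
    r_xy = math.sqrt(x * x + y * y)      # radius of "m" inside the XY plane
    if r_xy == 0.0:
        return (0.0, 0.0, 0.0)           # no XY direction to rescale (my own guard)
    if scenario == 2:
        target = math.sqrt(x * x + y * y + z * z)   # full radius of "m"
    else:
        target = 1.0                     # scenario 3: unit radius
    k = target / r_xy
    return (x * k, y * k, 0.0)           # same XY direction, new radius, Z = 0
```

For example, for m = (3, 4, 12), scenario 1 keeps (3, 4, 0) with radius 5, scenario 2 stretches it to radius 13, and scenario 3 shrinks it to radius 1.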

Click images for animations

Scenario 1 (symmetry maintained)

Scenario 2

Scenario 3

Scenario 4 (MDN)

As a side note, translation, which is known as linear movement, is symmetric in any number of dimensions, so the structure of objects is always maintained under translation, at least in a Euclidean space.

Relativity

For people who find physics interesting

Relativity affects the rate at which Time appears to flow because of a change in a geometric property of Reality called time-cadence, the property over which Change happens. Time can be thought of as a linear composition of the time-cadence of each iteration of the progression, individually for each quantum. This means that Change itself, separated from the time-cadence and velocity in space, is consistent, that is, it happens in exactly the same way.

To understand this composition of Time, think of watching a movie. The movie is the analog of the Universe changing, the frame rate is the analog of the time-cadence, the differences in the informational content of the frames are the analog of Change, and you are the analog of an external observer. You can change the frame rate of the movie to anything you want, and you can vary it throughout the movie. If you change the frame rate, you will see change happening slower or faster, but the differences in the content of consecutive frames remain the same no matter what frame rate you set.

When I was trying to understand the symmetry of rotation in the surrounding world, I was working on several personal projects and working for a job, all while trying to understand how it's possible for the Theory of Relativity to be correct.

The existing explanations were saying that, in void, from the point of view of each object, each is at rest and the others are moving, so the clocks of the others appear slower. But nobody was explaining how you can reconcile that with actual Reality, that is, what happens if you compare the clocks, since in that case you can't say that all are correct in claiming that the others are slower.

But how do you compare the clocks when they are at a great distance, moving with relativistic velocities, and are affected by the lack of the simultaneity of measurement? The Twin Paradox is a special case because the spaceship leaves from and returns to Earth, so the comparison is simple since the clocks are together, in the beginning and end, not moving relative to each other.

I knew that the mathematics was experimentally verified, so it had to be correct. That meant that I couldn't trust anything I was hearing. Combine that with the fact that the advanced mathematics was too advanced for me to learn in my spare time, and it felt like every neuron I had was gasping to reach for light. It was mind melting.

I was hungry. Literally. For calories, not knowledge. There were two or three days when I ate twice the amount of calories that I was used to, and I was still hungry. It was nuts. Literally. I was eating nuts for the extra calories.

I started to think about scenarios with two and three objects in void, where conflicting results had to be reconciled. A picture was becoming clearer and clearer in my mind, until I eventually understood that all the scenarios were correct but the contexts were different, so they could not be compared. But nobody was explaining why that was the case.

I've reconciled the conflicting scenarios by creating pseudo-simultaneity for large distances, with lasers, since the photon velocity is the only certain thing: the speed of light. Pseudo-simultaneity means that actual simultaneity can only be known much later, but can be known. Then, I realized that measuring time in the Universe is like trying to weigh different objects with different scales that use different measurement units (like kilograms and pounds), but you don't know what those units are, which makes the numbers shown by the scales irrelevant. Just the same, the relative velocity measured in void is irrelevant because each object could be moving relative to the others with any velocity, so it's not necessarily true that one object is at rest and the others do all the moving.

But why is this irrelevant relative velocity working for the Twin Paradox? How can you possibly make sense of this?

Simple: you bring the scales together and synchronize them by weighing the same object. After that, if you know how each object moves, you know how the measurement units change, so you know how the weight and time of each object change. And that's why the Twin Paradox works fine: the spaceship and Earth are together at the beginning of the trip, their clocks are synchronized, and the movements of the spaceship relative to Earth are known until the ship returns and the clocks can be compared directly.

But how does the Universe know how to do it without knowing how each object moves, without keeping track of each and every single quantum that zips around the Universe? The answer: there are no objects, there are no quanta. The Universe is all one single progression where every single point has no idea of any other single point, even though they all appear to work together and influence one another. That appearance is an illusion.

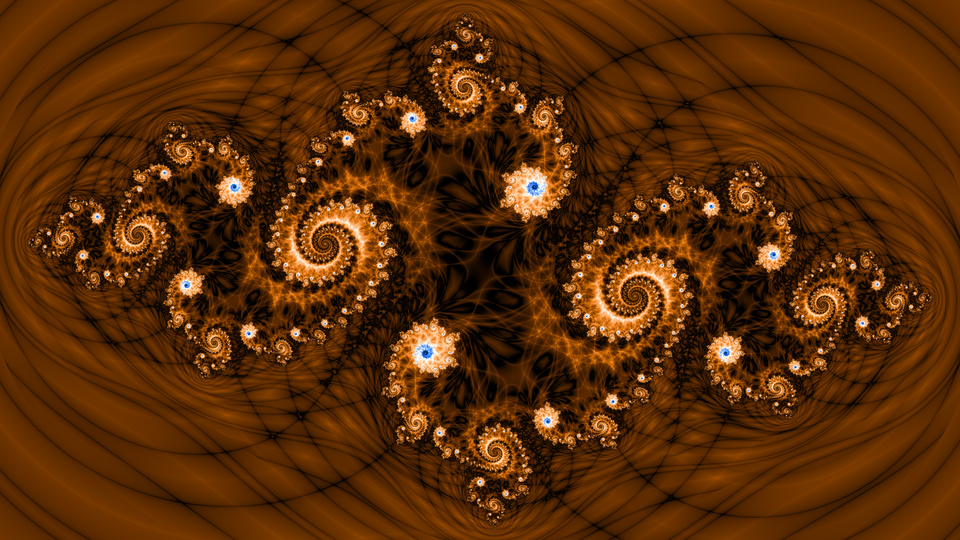

Wiring Of Reality

A common pattern that I follow is to see what other people have done so far in a domain, copy, experiment (since I don't know what exactly I'm looking for) and change that until it becomes something new.

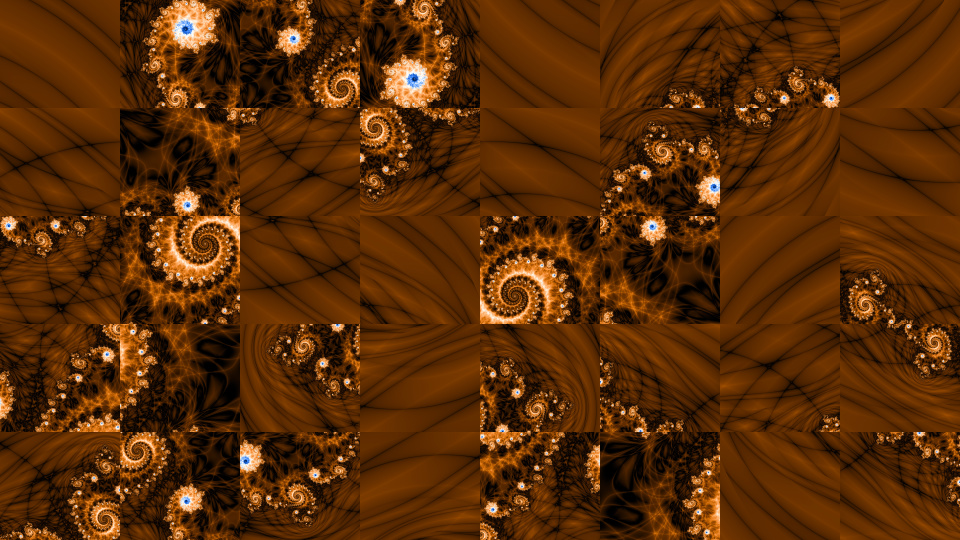

In the case of the "Wiring of Reality" genart, I was looking for equations that create smooth coloring, instead of the color bands that fragment the esthetics of iteration-based fractals.

During experimentation, I've found an interesting flaw in the algorithm: the color bands were accentuated because of a wrong sign. It was nice, but ultimately not interesting enough to investigate further.

Then, I've found new equations that seemed to smoothen the colors a bit better than any equation that other people recommended. Once that problem was solved, I decided to give another try to the pattern that accentuated the color bands, using variations of my new equations, combined with another mistake I've made, as I've later realized.

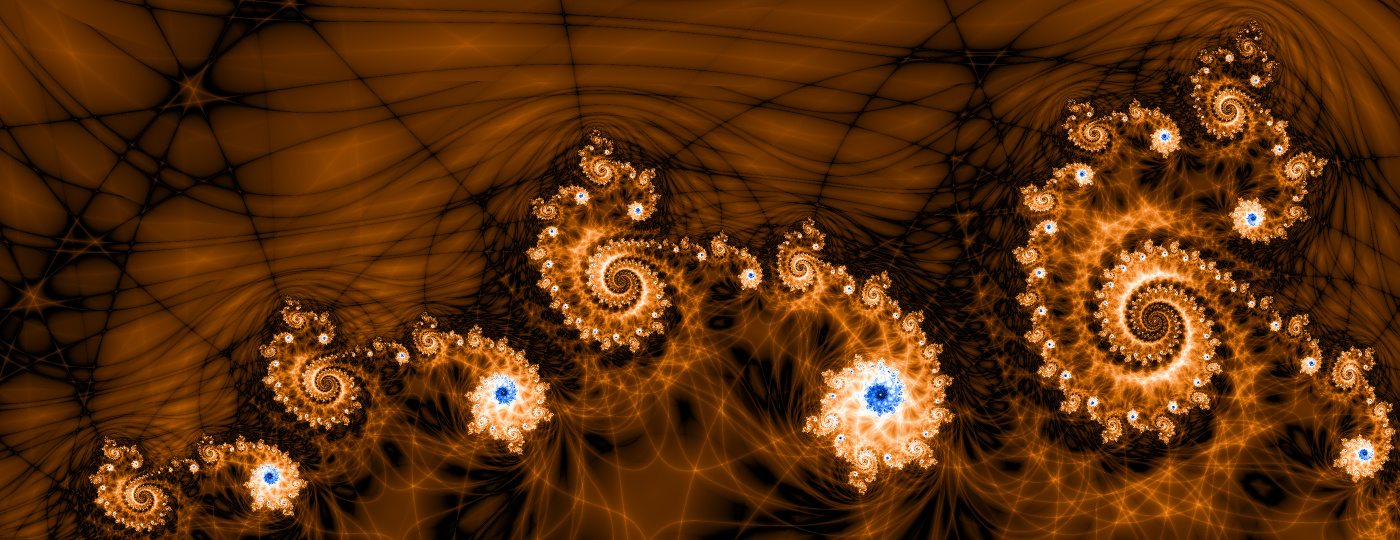

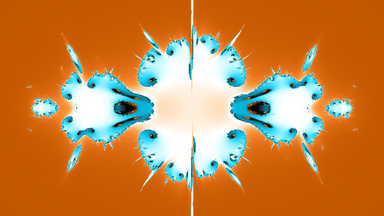

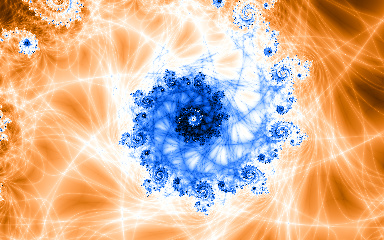

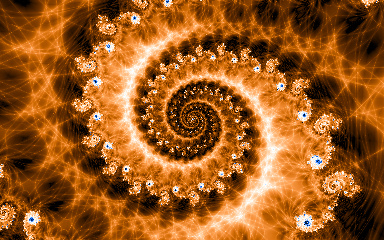

And that's when I was left in awe: the color bands vanished and the wiring appeared, while the smoothness was maintained. Some structures are so spectacular and familiar that the mind can't decide whether they are pure abstractions or they must be some part of Reality.

Opportunities - Chocolate Eclipse, 2021

Click image for animation

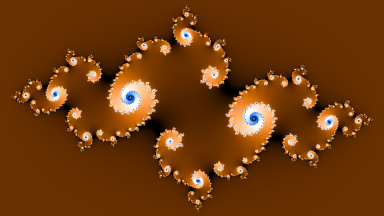

The source Julia fractal

Further experimentation showed that it's possible to have color bands that exhibit an artistic smoothness that makes them look like pieces of architecture, and other spectacular effects.

Magic Ingredient

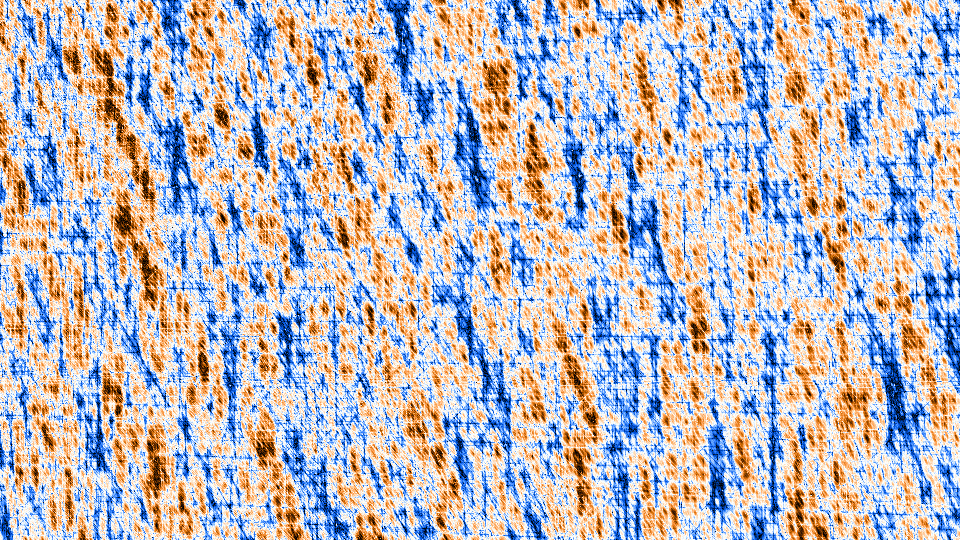

If you're mathematically inclined, here is the magic ingredient that makes the wiring.

The MDN escaper equation that terminates the loop of the pixel generation of the fractal is the usual "radius above an escape threshold". However, the pixel color isn't generated from the number of iterations until escape, but from an accumulator that is computed in the loop.

The accumulator is part of the MDN reducer equation, the equation that reduces the generated MDN codomain to a scalar. The accumulator is somewhat similar to other ones that are already used in fractals to avoid color banding:

a += 1 - (ev - evp).LoopLnA( s1 ) / et.LoopLnA( s1 - 1 )

Legend: "a" is the accumulator (starts at 0), "ev" is the escape value (not the escape threshold, but the actual escape value) of the pixel, "evp" is the previous-iteration escape value (so it's not actually escaped), "et" is the escape threshold of the fractal (for example 2). You can find this equation implemented in the source code of Fluogen, in the "WiringOfReality" file.

But the actual magic happens in "LoopLnA", something I've never seen elsewhere. This is an iterated natural logarithm of the absolute value that is in front of it. "s1" is a constant, the number of iterations of the logarithm (for example 5).

Changing the number of iterations doesn't produce a linear response, that is, it doesn't simply increase the number of wires. It initially does that to some extent, but after 5 it drops significantly, and then starts to vary between signal and noise, sometimes chaotic noise and sometimes uniform noise in which familiar shapes can be clearly seen. However, if you zoom into the noise, you can see the crisscross made by a lot of wires. At 55 iterations, you have to zoom in 10^12 times to see some pattern in the noise.
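For readers who want to experiment, here is a minimal Python sketch of the accumulator and of the iterated logarithm. It is not the Fluogen code: it assumes an ordinary two-dimensional Julia iteration on Python complex numbers instead of the multidimensional rotation, it assumes that the accumulator is updated on every iteration with the current and previous radius, and the names `loop_ln_a` and `wiring_accumulator`, as well as the tiny floor that avoids taking the logarithm of 0, are my own.

```python
import math

def loop_ln_a(value, count):
    """Apply the natural logarithm of the absolute value, `count` times."""
    v = value
    for _ in range(count):
        # Floor at a tiny positive number so that ln(0) is never evaluated
        # (an assumption of this sketch, not part of the original equation).
        v = math.log(max(abs(v), 1e-300))
    return v

def wiring_accumulator(z, c, et=2.0, s1=5, max_iter=200):
    """Accumulate a += 1 - LoopLnA(ev - evp, s1) / LoopLnA(et, s1 - 1)."""
    denominator = loop_ln_a(et, s1 - 1)   # constant for the whole image
    a = 0.0
    evp = abs(z)                          # radius before the first iteration
    for _ in range(max_iter):
        z = z * z + c                     # "m * m + c", two-dimensional stand-in
        ev = abs(z)                       # radius after this iteration
        a += 1.0 - loop_ln_a(ev - evp, s1) / denominator
        if ev > et:                       # usual escaper: radius above threshold
            break
        evp = ev
    return a
```

The returned scalar would then be mapped to a color by a palette; calling `wiring_accumulator(complex(0.3, 0.2), complex(-0.8, 0.156))` gives the value for a single pixel.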

The Plan

Some images and animations are freely available for download, in medium resolution. The full content, with the highest quality, is available for purchase through NFTs. Prints and digital downloads may be available for purchase. Options:

Digital downloads of the public content of the NFTs, including from the gallery. Characteristics: 2K resolution images (with Author's signature), 2K resolution animations (with Author's signature), free, not resalable, no scarcity.

Digital downloads. Characteristics: 8K resolution images, low cost, not resalable, no scarcity.

Prints. Characteristics: 16K resolution images, cost based on physical size, resalable, no scarcity.

NFTs. Characteristics: 16K resolution images, 8K resolution animations, resalable, scarcity.

Custom genart request. Characteristics: on request.

All genart is meant to be viewed as wallpapers on TVs or computer displays, printed and mounted on walls, or for further processing.

A Glimpse Into Future

The first lore NFTs to be published were The Nifties, a collection of six unique microstories about famous people who are involved in the NFT and cryptocurrency space, personalized with details from their lives. You can read the text here.

Some genart to pay attention to:

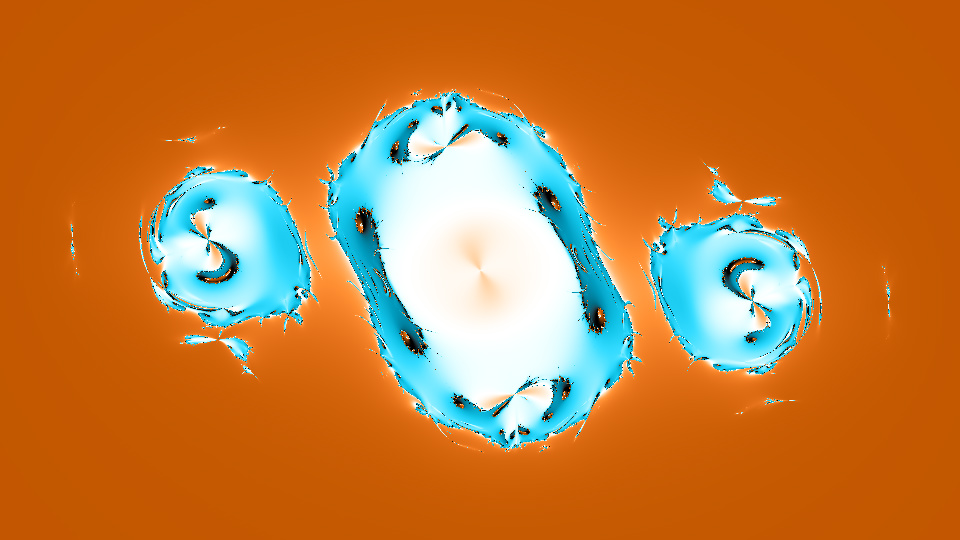

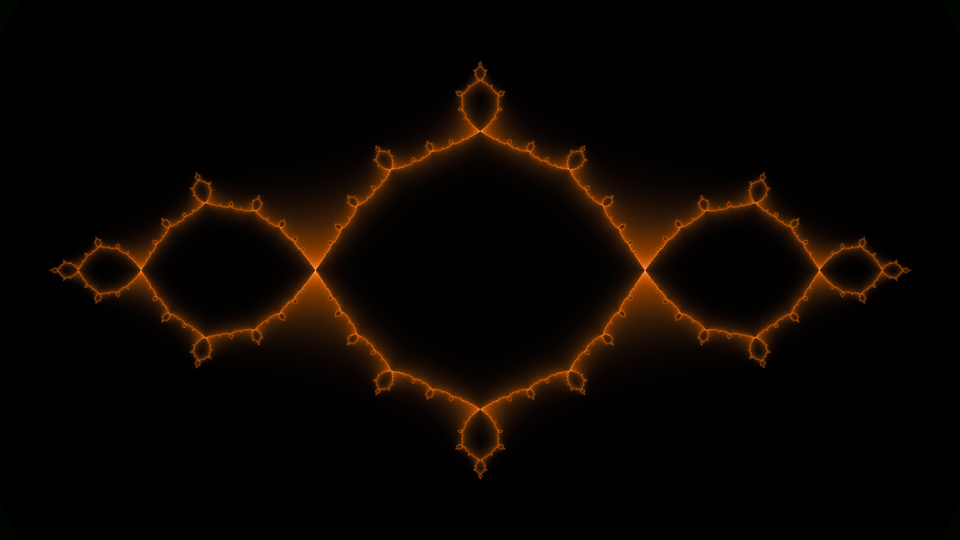

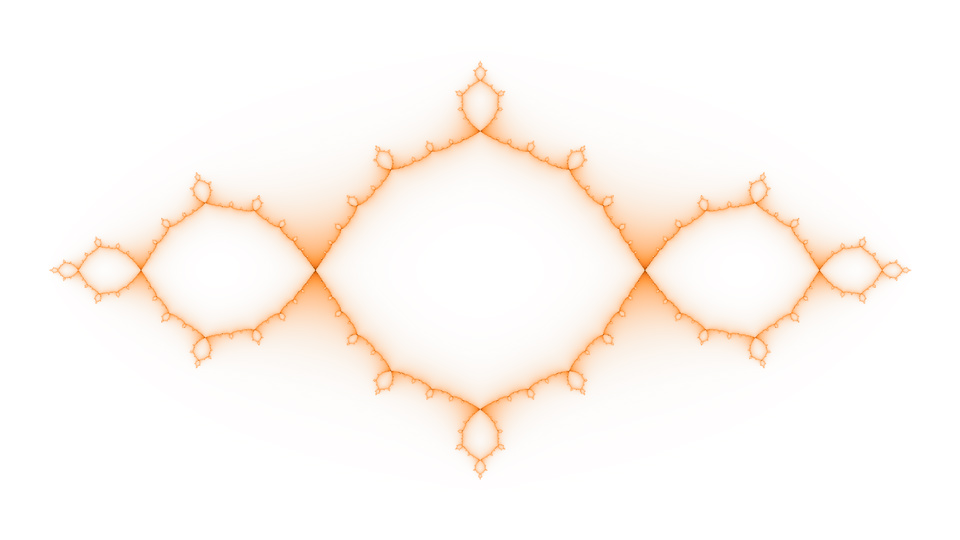

117 Billion (set): The genart that started the artistic work. One of the first genart rendered, sometime in the early 1990s (probably 1993). The name and the shape symbolize the number of people who are estimated to have ever been born (external source). The stylized female reproductive system is the unexpected result of a simple equation; it isn't manually designed. The generating equation is three-dimensional, but isn't related to the multidimensional mathematics discovered by the Author.

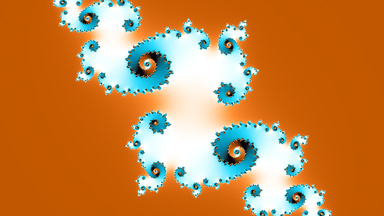

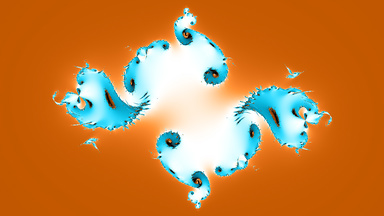

Wiring Of Reality (set): A mesmerizing visual style that was never seen before in fractals. Some genart animations made with this style, "Opportunities" and "Dragon Fire", are variants of Breakthrough, the animation that made me push things further.

Opportunities - Chocolate Eclipse: The first published genart with the "Wiring Of Reality" visual style, both as image and animation. The animation is 200 seconds long and has 6'000 frames with a total size of 550 GB. Its private content is a staggering 20 GB. The frames were generated in about 26 hours, while encoding them in video files took about 22 hours. The XQ animation took 16 hours to encode and its size is 14 GB, while the normal 8K animation took 3 hours. This is one of the quickest animations to generate. Because this animation is symmetric, that is, it goes in one direction and then back, only half of the specified total of frames are generated, while the rest are copied; however, all of them have to be video-encoded because the encoder can't handle this symmetry. The private contents of these image and animation NFTs are available for everyone here.
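The frame reuse of a symmetric animation can be sketched as follows. This is an illustration only: it assumes that the second half simply mirrors the first half frame by frame, which is one possible layout and not necessarily the one used by the generating software, and the function name `source_frame` is hypothetical. The 200 seconds and 30 frames per second are the figures stated for this animation.

```python
def source_frame(index, total_frames):
    """Map an output frame index to the generated frame that it reuses."""
    if not 0 <= index < total_frames:
        raise IndexError("frame index out of range")
    # Forward during the first half, mirrored during the second half.
    return min(index, total_frames - 1 - index)

total = 200 * 30                      # 200 seconds at 30 frames per second
order = [source_frame(i, total) for i in range(total)]
generated = len(set(order))           # frames that actually have to be rendered

print(total, generated)
```

With these numbers, 3'000 frames are rendered and each is used twice, while the encoder still has to process all 6'000 frames.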

Dragonfly Sonata (set): An extravaganza of shapes and colors.

Eternal Firefly (set): A variant of "Dragonfly Sonata".

Butterfly Symphony (set): Closely related to "Dragonfly Sonata", but emphasizing the silky, delicate patterns exhibited by the pixels that didn't escape from the fractal threshold.

Eight (set): If number 8 is good luck, here's math making it 8 times. The generating equation is three-dimensional, but isn't related to the multidimensional mathematics discovered by the Author.

Circle Of Life: Two butterflies, one within the other.

NFT Content

Multiple resolutions are available in an NFT, up to 16K for images, and up to 8K for animations.

All public content is usually published as 2K resolution images and 2K resolution animations, both with the Author's signature.

The aspect ratio is almost exclusively 16:9, to exactly fill TV displays, but sometimes it may be square. Any aspect ratio can be requested through a custom genart request.

All PNG images are saved with 48-bit colors, with no loss of quality. All JPG images are saved with 24-bit colors, with some loss of quality.

The private content of an image NFT contains several image files:

"Master - [Genart name].png / jpg" = The master genart in 16K resolution.

"Image [N]K - [Genart name].png / jpg" = The genart in [N]K resolution. "N" can be 2, 4, 8.

"Image NCP 8K - [Genart name].jpg" = The genart in 8K resolution, saved without an embedded color profile. This may be useful when you need to display / print the genart through a flow that is only partially color managed.

"image_cover_[Genart name].png / jpg" = The genart in 2K resolution, with the Author's signature on it.

"image_cover_srgb_[Genart name].jpg" = The genart in 2K resolution, in the sRGB color space.

"image_gallery_[Genart name].jpg" = The genart in 1K resolution.

"image_thumbnail_[Genart name].jpg" = The genart's thumbnail (384 pixels wide).

"Mosaic 01 - [Genart name].png / jpg" = Mosaic of the master genart in 16K resolution.

"Mosaic [N] - [Genart name].jpg" = Mosaics of the master genart in 8K resolution.

"Mosaic NCP 8K - [Genart name].jpg" = Mosaic of the genart in 8K resolution, saved without an embedded color profile. This may be useful when you need to display / print the mosaic through a flow that is only partially color managed.

"mosaic_cover_[Genart name].png / jpg" = Mosaic of the genart in 2K resolution, with the Author's signature on it.

"mosaic_gallery_[Genart name].jpg" = Mosaic of the genart in 1K resolution.

"MOCs" = Folder with JSON files with the configurations of the mosaic tiles that were used to create the mosaics.

"print_argb_[N]k_[Genart name].png / jpg" = The genart in [N]K resolution, ready for printing. "N" can be 8, 16. The colors of these images are converted to a color profile that most printing services recognize, AdobeRGB, which is also embedded in the images. The JPG image is available because most printing services accept only very small files and only 8-bit colors, and the JPG fits these limitations; the disadvantage is that it may show some color banding.

"Extra" = Folder with extra content, like the license terms and the puzzle solver. You can open (in a browser) the "\puzzlesolver\puzzlesolver.htm" file in order to solve puzzles.

Mosaics aren't included in image NFTs if they look bad, as they would only take up space.

The visual quality of animations is limited due to the reduced video decoding power of TVs, but is acceptable when viewed from a distance larger than a TV diagonal. Because of this, an animation of exceptional quality, XQ, is included, but it can't be played by TVs.

The private content of a genart animation NFT contains animations in 1K, 2K, 4K and 8K resolution, 30-bit colors, 30 frames per second, encoded with the AV1 codec, in an MP4 container. The XQ animation is encoded with the VP9 codec.

The private content of an animation NFT contains several image and video files:

"animation_poster_[Genart name].jpg" = A relevant frame of the animation, in 1K resolution.

"animation_cover_[Genart name].mp4 " = The genart in 2K resolution, with the Author's signature on it.

"animation_gallery_[Genart name].mp4 " = The genart in 1K resolution, with the Author's signature on it.

"animation_social_[Genart name].mp4 " = The genart in 1K resolution, with the Author's signature on it. This animation may flow / play much faster than the other animations from the NFT because it's intended for social media (where people don't have the patience to wait for slow animations).

"[Genart name] - [N]K.mp4" = The genart in [N]K resolution. "N" can be 4, 8.

"[Genart name] - XQ.mp4" = The genart in 8K resolution, exceptional quality. The individual frames look almost indistinguishable from the original PNG frames, so for all practical purposes the animation looks lossless. Not playable on TVs, but can be played on a computer and passed to a TV through an HDMI cable.

"Highlights - [Genart name]" = Folder with highlight frames in 8K resolution, saved in PNG format. They are usually saved one every 5 seconds. You may want to use the JPG images from the "Highlights - JPG" folder because the Windows desktop slideshow doesn't support large PNG files, and TVs don't support large resolution PNGs.

"Highlights - JPG - [Genart name]" = Same as "Highlights - [Genart name]" but the frames are saved in JPG format. These images don't have an embedded color profile because the Windows desktop wallpaper doesn't handle it properly, and TVs ignore it.

"Extra" = Folder with extra content, like the license terms.

Slow animations are meant to be used in environments where they can create a relaxed background atmosphere.

The private content of a lore NFT contains images with multiple color palettes, in 16K resolution, that can be printed as a huge, high quality poster.

Resolution and Quality

What's the benefit of a 16K image? See for yourself the difference between what you would see on a normal 2K computer display with a (let's say) 27 inch diagonal, and a 16K display with a 216 inch diagonal (so 8 times larger):

2K (resized from 16K)

16K center crop

16K top-left crop

Ironically, as awe inspiring as it is to see a 16K image, it only makes you hungrier for a higher resolution that lets you see deeper. But fractals never end, so it literally is a chase toward infinity.

If larger prints are required, it's possible to generate several 16K images and combine them during printing.

Loading a high resolution image in an image viewer may take even tens of seconds if the computer isn't powerful enough, so be patient.

The genart can technically be rendered with phenomenal quality, with 48-bit colors, lossless compression and any frame rate for animations. However, the requirements for online storage space, download bandwidth, and resources for image generation and viewing, impose limitations. The maximum tested resolution was 1 gigapixel, the equivalent of 32'000 * 32'000 pixels; to generate this, the computer must have at least 32 GB of RAM. The maximum tested width was 65'000 pixels. At these sizes, average computers and some image viewers fail to display the images.

For an average computer with 8 GB of RAM, a usable image resolution is 16K, the equivalent of 133 megapixels, or 64 HD images, or 8x physical zoom. This kind of resolution is useful only when the images are printed in a very large format, cropped significantly, or viewed on 8K TVs.
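The figures above can be checked with a few lines of Python. The sketch assumes that "[N]K" means a width of N * 960 pixels (1K is given elsewhere on this page as a 960 pixel width), a 16:9 aspect ratio, and that "HD" means 1920 * 1080 pixels; the helper name `nk_size` is hypothetical. The raw size is only the uncompressed pixel data at 48 bits per pixel, not the actual memory that a renderer or viewer needs.

```python
def nk_size(n, aspect=(16, 9)):
    """Width and height in pixels of an [N]K image (assumed N * 960 wide)."""
    width = 960 * n
    height = width * aspect[1] // aspect[0]
    return width, height

w16, h16 = nk_size(16)                # 16K image
pixels_16k = w16 * h16
w_hd, h_hd = nk_size(2)               # HD image, 1920 x 1080
hd_images = pixels_16k // (w_hd * h_hd)

BYTES_PER_PIXEL = 6                   # 48-bit colors, uncompressed
raw_16k_bytes = pixels_16k * BYTES_PER_PIXEL
gigapixel = 32_000 * 32_000           # maximum tested resolution
raw_gigapixel_bytes = gigapixel * BYTES_PER_PIXEL

print(w16, h16, pixels_16k, hd_images)
print(raw_16k_bytes / 1e9, raw_gigapixel_bytes / 1e9)
```

A 16K image comes out at 15'360 * 8'640 pixels, about 133 megapixels, which is 64 HD images; its raw pixel data is roughly 0.8 GB, against roughly 6.1 GB for the 1 gigapixel test.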

Trivia:

A 16K genart image can take from minutes to hours to generate, on an average desktop computer.

An 8K animation can take from hours to thousands of hours to generate.

To view 8K animations, a powerful computer, or a device certified for 8K movies, is required.

Animations normally loop seamlessly, that is, they can be played on repeat without any visual interruption between the end and the (new) beginning, if the video player supports perfect looping (MPC-HC and VLC do, web-browsers usually do).

Custom Genart Requests

Any genart that wasn't yet published as print or NFT can be purchased through a custom request. Visit the Gallery for the available genart.

Anyone can request the Author to create custom genart and publish it as print or NFT.

Suggestions are free to make, but no fulfillment timeframe is provided.

The price of a print is that of a normal print, but the genart has no scarcity.

A custom NFT may have the "Patron" or "Exclusive" license type.

The custom genart must have a visual style that is markedly different than the genart of any NFT. Genart scarcity (and what "markedly different" means) is clarified here.

The buyer pays only at the end.

A custom request that is free to make is to change the license type of an existing NFT that wasn't yet sold by the Author. A new NFT is made with the new license type, and once it's purchased, the replaced NFT is burned.

A custom genart has to be generated for each custom request, regardless of what has to change from an existing genart. This is particularly important for animations, due to the very long generation time.

Before purchase, multiple samples can be provided in 1K resolution (= 960 pixel width); this is the resolution of the images from the Gallery. Larger physical sizes and larger resolutions look much better.

Collectors who have already purchased an NFT (with a license type other than "Supporters") have two benefits:

Can request samples in 2K resolution, at least for images.

Can request a genart whose generation time is much longer than the generation time of a basic animation like Opportunities - Chocolate Eclipse.

The buyer can specify a text to be put in the "Autograph" metadata of the custom NFT. The text must be in English, must be decent, and is capped at around 500 characters. Note that autographs may hinder sales in the secondary market.

If the buyer specifies the equations or configuration from which to generate the genart, the buyer can specify a text to be put in the "Author" metadata of the custom NFT. The text must be in English, must be decent, and is capped at around 50 characters.

Examples of requests:

Offset colors. If the NFT is an animation, the colors can cycle.

Another color palette. If the palette is provided by the buyer, it should be a gradient palette, using either HSL or RGB colors; the interpolation will be monotone cubic, using the Jab (CIECAM) colorspace.

A deep zoom into (or out of) an existing genart. The zoom factor has to be at least 4.

A frame of an existing animation.

Set the genart configuration based on the buyer's wallet address, or the Autograph metadata.

Split a piece of genart in multiple high resolution tiles, for outlandish architectural design projects that want to showcase the genart, especially a mosaic, by printing it on various materials like metal, acrylic glass, wood or porcelain tile, and installing it on a wall or floor.

Some requests require swapping an existing NFT for the custom one (in order to preserve the scarcity of the genart). Examples:

A different aspect ratio.

A higher resolution. If an animation is supposed to have twice the width, the time required to generate it will be four times higher.

A different animation speed. If an animation is supposed to be viewed twice as slow, then twice as many frames have to be generated.
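The two scaling rules above can be turned into a rough estimate. This is a back-of-the-envelope sketch, assuming that the generation time grows linearly with the number of pixels and with the number of frames; the function name `scaled_generation_hours` is hypothetical, and the 26 hours is the frame generation time stated earlier for Opportunities - Chocolate Eclipse.

```python
def scaled_generation_hours(base_hours, width_factor=1.0, slowdown_factor=1.0):
    """Estimate the generation time after changing width and playback speed."""
    pixel_factor = width_factor ** 2  # twice the width means four times the pixels
    frame_factor = slowdown_factor    # twice as slow means twice as many frames
    return base_hours * pixel_factor * frame_factor

base = 26.0                           # hours, Opportunities - Chocolate Eclipse
print(scaled_generation_hours(base, width_factor=2))
print(scaled_generation_hours(base, slowdown_factor=2))
print(scaled_generation_hours(base, width_factor=2, slowdown_factor=2))
```

Under these assumptions, doubling the width gives about 104 hours, halving the speed gives about 52 hours, and doing both gives about 208 hours.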

Can a custom animation have a soundtrack? Technically, yes, by licensing songs, but licensing existing songs for NFTs is basically impossible. Also, for an exceptional match between an animation and a song, the animation has to be choreographed for that song, that is, its tempo has to be variable, and various effects have to be introduced during beats. A lot of work has to be put into modifying the generating software to be able to do this, and a lot of work has to be put into choreographing the animation.

Viewing the Genart

All genart is meant to be viewed as wallpapers on TVs or computer displays, printed and mounted on walls, or for further processing.

Multiple resolutions are available, from 1K to 8K for animations and up to 16K for images.

The genart comes as computer files, so you can put them on a USB drive and connect that drive to a computer or TV.

The work to ensure that the PNG images of the genart contain accurate colors was tremendous. The colors were checked against multiple image viewers, video players, web-browsers and web articles, in various lighting conditions (which affect the way colors are perceived).

Displaying accurate colors, at the level of an art gallery, on TVs and computer displays is a dream, in the year 2022. Most software applications implement color management partially or wrongly, and this results in inaccurate colors shown to the viewer. The pipeline of displaying colors starts accurately with the genart, but is then affected by image viewers, video encoders, video players, web-browsers, processing power limitations, hidden workarounds, and even hardware limitations (like 24-bit displays). Each of these factors works against color accuracy and high quality details.

The biggest problem in displaying accurate colors is that some image viewers can't accurately display JPGs with an RGB pixel format (they need YCbCr). Video encoding is even harder to do accurately because most video players can't properly play video encoded with an RGB pixel format (they need YUV420) or with a full color range (they need it to be TV limited). This happens despite the fact that they are software running on computers, and computer displays work only with an RGB pixel format, full color range. Even worse, for the same output size, non-RGB encodings give visibly worse results (at least in fine gradient areas). But, in the end, it worked out well enough.
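One of the reasons why the limited (TV) color range costs detail can be shown with a small Python sketch. It only models a single 8-bit gray channel squeezed into the 16 to 235 luma range of limited-range video and expanded back; it ignores the YCbCr matrix, chroma subsampling and the encoder itself, so it illustrates one contributing factor and is not a model of the whole pipeline. The function names are hypothetical.

```python
def full_to_limited(v):
    """Map a full range value (0..255) to limited range luma (16..235)."""
    return 16 + round(v * 219 / 255)

def limited_to_full(y):
    """Map limited range luma (16..235) back to full range (0..255)."""
    return min(255, max(0, round((y - 16) * 255 / 219)))

limited = [full_to_limited(v) for v in range(256)]
distinct = len(set(limited))          # distinct codes after the squeeze
lost = sum(1 for v in range(256)
           if limited_to_full(full_to_limited(v)) != v)

print(distinct, lost)
```

Out of 256 input levels, only 220 distinct codes survive, so 36 levels don't come back as the value they started with, which is the kind of loss that becomes visible in fine gradients.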

Viewing tests have been mostly performed with the Opportunities - Chocolate Eclipse genart (images and animations) because, for some reason, its reddish brown colors are very challenging to reproduce accurately, by both software and hardware. Other colors, in other genart, show few or none of the mentioned color issues.

Viewing on Computers

All genart is created on a display with a wide color gamut (similar to that of Display-P3), with gamma 2.2, illuminant D65, and a measured brightness of 100 cd/m². If you need the ICC profile of the display, you can download it from here.

The correct way to view the colors is in similar conditions, which makes the genart look exceptional, but, ultimately, the way to view them is the way each person likes it.

The best way to view the genart is on a display / printer that is hardware calibrated to the Display-P3 colorspace, and with software color management disabled (in the operating system, image viewer and video player). Apple uses by default the Display-P3 colorspace throughout their ecosystem.

If your display has a narrow color gamut, like sRGB, and your image viewer doesn't support color management, you will see the images with washed out colors; colors like red, brown, green are particularly affected.

The sensitivity of the eye to red decreases in the evening, so red hues will appear slightly shifted toward yellow and green. The lighting conditions contribute to this, as artificial light is usually more yellow than sunlight.

For best image quality, use the original PNG files, which support 48-bit colors.

On Windows, "Windows Photos" appears to be the most accurate at color management, and best at converting the stored 48-bit colors to the standard 24-bit colors that most displays show, with slightly less color banding (probably better rounding).
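To illustrate why the rounding method can matter when 48-bit colors are reduced to 24-bit colors, the sketch below compares two common ways of converting one 16-bit channel value to 8 bits. It does not claim to reproduce what any particular image viewer does; both function names are illustrative.

```python
def to_8bit_truncate(v16):
    """Drop the low 8 bits of a 16-bit channel value."""
    return v16 >> 8

def to_8bit_round(v16):
    """Scale a 16-bit channel value to 8 bits, rounding to the nearest code."""
    return (v16 * 255 + 32767) // 65535

def max_error(convert):
    """Largest distance (in 8-bit steps) from the exact scaled value."""
    return max(abs(v * 255 / 65535 - convert(v)) for v in range(65536))

print(round(max_error(to_8bit_truncate), 3))  # 0.992
print(round(max_error(to_8bit_round), 3))     # 0.498
```

Truncation can be off by almost a full 8-bit step, while rounding stays within half a step, so the same gradient can land on slightly different band edges depending on the method.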

If you need video quality at the level of an art gallery, the video rendering of MPC-HC (Media Player Classic - Home Cinema) is superior to that of VLC (and of web-browsers), and the XQ animations look spectacular. You can especially see the difference if you save snapshots with both of them: the snapshots saved with MPC-HC show smooth colors, while those saved with VLC show slight color banding.

For animations, the web-browsers based on Chromium (Brave, Chrome, Edge) show quite prominent color banding, but the colors are accurate enough. Firefox doesn't show color banding, but the colors are shifted toward red.

If you want to display the genart as computer wallpaper, take the following into consideration:

Windows allows users to directly use PNG files as wallpapers, so use that feature.

Windows won't let you use a PNG file whose size is above 25 MB. A 4K PNG genart can easily exceed this limit. In such cases, use the provided JPG file or the 2K PNG.

An active "night light" mode (which massively shifts the colors toward red) may cause color banding.
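The 25 MB limit mentioned above is easier to judge against the uncompressed size of the pixel data. The sketch below computes that raw size, assuming 16:9 frames of 1920x1080 for 2K and 3840x2160 for 4K; the actual genart dimensions may differ, and whether the limit counts decimal or binary megabytes is not stated.

```python
def raw_size_mb(width, height, bits_per_channel, channels=3):
    """Uncompressed pixel data size in megabytes (1 MB = 1,000,000 bytes)."""
    return width * height * channels * (bits_per_channel // 8) / 1_000_000

# Assumed frame sizes; 16 bits per channel corresponds to 48-bit color.
for name, (w, h) in {"2K": (1920, 1080), "4K": (3840, 2160)}.items():
    print(f"{name}: {raw_size_mb(w, h, 16):.1f} MB raw at 48-bit color")
```

Under these assumptions a 4K image holds about 49.8 MB of raw data, so lossless PNG compression would have to roughly halve it to fit, while a 2K image holds about 12.4 MB and fits even before compression.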

Color management advice:

The best way to view the genart is on a display / printer that is hardware calibrated to the Display-P3 colorspace, and with software color management disabled (in the operating system, image viewer and video player). Software color management produces accurate colors, but may also show artifacts, like color banding.

If you can't do the above, it would be great if you could install (in your operating system) the color profile obtained from the display's manufacturer (for your display model). In the ideal case, the display should be color profiled (with a colorimeter or spectrophotometer).

All genart images have embedded in them the color profile of the display on which they were created. This lets image viewers adjust the colors so that you can see the intended colors, as closely as possible.

All genart animations are marked as intended for the Display-P3 colorspace, which is very close to the gamut of the display on which they were created. However, color management in video players is either missing or wrong.

For more accurate colors, you should activate color management in the configuration of your image viewer. "Windows Photos" and "Windows Photo Viewer" activate it automatically.

Here are some image viewers whose color management was tested, on Windows: "Windows Photos", "Windows Photo Viewer", XnViewMp, IrfanView, FastStone. The reference used was LittleCMS. There are various scenarios in which the image viewers show wrong colors, but FastStone was consistently off.

The Windows desktop wallpaper isn't color managed. It uses the color profile embedded in images, but treats them as if they are made for sRGB colorspace, which makes them appear to have overly saturated colors on wide-gamut displays. This is why on a wide-gamut display you should either use the files whose names contain "NCP", or the PNG files. A more general alternative is to set the wallpaper with software that ignores the color profile (like IrfanView).

Even when a software application is color managed and accurate, it may still fail to display accurate colors, because video encoders don't necessarily put accurate colors in the animations. This is worst when the color management can't be disabled, but even when it can be, the result can still be bad because the video encoders shift the colors before they write them into animations. Unfortunately, that's the only encoding mode that most software applications can display.

MPC-HC has user-activated color management, but it's wrong. External download.

VLC isn't color managed. This is good on a wide-gamut display, but unfortunately it shows severe color banding in low detail areas (much less so in the XQ animations). External download.

Firefox is color managed and accurate for images. Videos are slightly redder than they should be, but this appears to be correct because they are marked for the Display-P3 colorspace that's slightly redder (than the display on which they were viewed when created). Firefox can't even open the XQ animations.

The web-browsers based on Chromium (Brave, Chrome, Edge) have perceptibly off color management (at least on wide-gamut displays). They show image colors slightly redder than they should be. Videos are slightly more yellow than they should be.

Some image viewers support color management only in part. In such cases, you may be able to avoid bad looking colors by using the genart images whose names contain "NCP" or those that are saved as PNG files.
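The advice above relies on the color profile that is embedded in the images. As a minimal sketch, the code below checks whether a PNG carries such a profile by reading its iCCP chunk, using only the Python standard library. The function names and the demo bytes are illustrative; the demo is a hand-built PNG skeleton with placeholder profile data, not a genart file.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"

def read_embedded_icc(png_bytes):
    """Return (profile_name, icc_bytes) from a PNG's iCCP chunk, or None."""
    if not png_bytes.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    pos = len(PNG_SIGNATURE)
    while pos + 8 <= len(png_bytes):
        length, chunk_type = struct.unpack(">I4s", png_bytes[pos:pos + 8])
        data = png_bytes[pos + 8:pos + 8 + length]
        if chunk_type == b"iCCP":
            # Layout: name, NUL byte, compression method byte, zlib stream.
            name, _, rest = data.partition(b"\x00")
            return name.decode("latin-1"), zlib.decompress(rest[1:])
        if chunk_type in (b"IDAT", b"IEND"):
            break  # the profile chunk must come before the pixel data
        pos += 8 + length + 4  # chunk header + data + CRC
    return None

def make_chunk(chunk_type, data):
    """Build one PNG chunk (used only to create the demo bytes below)."""
    crc = zlib.crc32(chunk_type + data)
    return struct.pack(">I", len(data)) + chunk_type + data + struct.pack(">I", crc)

# Demo on a tiny PNG skeleton (no pixel data) with placeholder profile bytes.
demo_png = (
    PNG_SIGNATURE
    + make_chunk(b"IHDR", struct.pack(">IIBBBBB", 1, 1, 16, 2, 0, 0, 0))
    + make_chunk(b"iCCP", b"Demo profile\x00\x00" + zlib.compress(b"placeholder ICC data"))
    + make_chunk(b"IEND", b"")
)
print(read_embedded_icc(demo_png))  # ('Demo profile', b'placeholder ICC data')
```

For a real file, pass the file's bytes, for example `read_embedded_icc(open("image.png", "rb").read())`, where `image.png` is a placeholder name. A `None` result means that an image viewer has no embedded profile to work with.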

Some genart may show slight artifacts like color banding, and even slight hue variation, in the mid-lightness areas. This is due to several factors:

Software color management produces more accurate colors, but may cause displays and printers to imperfectly display color gradients (even gray gradients may exhibit tiny hue shifts). The best way to view the genart is on a display / printer that is hardware calibrated to the Display-P3 colorspace, and with software color management disabled (in the operating system, image viewer and video player).

An active "night light" mode (which massively shifts the colors toward red) may cause severe color banding.

Color gradients with a fixed hue and a variable lightness, like those used in genart, are imperfectly converted to RGB by known formulas.

Some fractals use a coloring process that may intrinsically exhibit color banding.

Some genart pieces contain extremely fine color gradients whose pixel-to-pixel RGB channel variation requires at least 12 bits to store. The conversion of such variations to the usual 8 bits per channel can result in visible interruptions in gradients.

JPEG compression adds artifacts because it doesn't preserve all the information from the genart.

Some image viewers that let you set the computer wallpaper convert the PNG genart to BMP or JPEG, a conversion that may cause color banding.

Most computer displays and printers can reproduce only 24-bit colors (8 bits per RGB channel) which may intrinsically exhibit banding in fine color gradients.

The genart from the private content is saved with 48-bit colors, so a professional display or printer that can reproduce at least 30-bit colors, together with the appropriate software, should show no color banding, or at least reduce it significantly.
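The effect of bit depth on fine gradients, mentioned in the factors above, can be estimated by counting how many distinct values a gradient keeps after quantization. The sketch below does this for a hypothetical gradient that spans 5% of the channel range across 3840 pixels; the gradient, its width and the function name are assumptions chosen for illustration, and gamma encoding is ignored.

```python
def band_stats(start, end, width_px, bits):
    """Quantize a linear gradient; return (distinct levels, average band width in px)."""
    max_code = (1 << bits) - 1
    codes = {
        round((start + (end - start) * x / (width_px - 1)) * max_code)
        for x in range(width_px)
    }
    return len(codes), width_px / len(codes)

for bits in (8, 10, 12):
    levels, band_px = band_stats(0.40, 0.45, 3840, bits)
    print(f"{bits}-bit: {levels} levels, bands about {band_px:.0f} px wide")
```

With 8 bits per channel this gradient keeps only 14 levels, so each band is hundreds of pixels wide and easy to see; with 12 bits it keeps 206 levels and the bands shrink to under 20 pixels.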

Viewing on TVs

The genart comes as computer files, so you can put them on a USB drive and connect that drive to a TV. If the files are archived in a ZIP file, they must be extracted from the archive before you put them on the USB drive. The XQ animations can't be played by current TVs, but can be played on a computer and passed to a TV through an HDMI cable.

The genart is tested on a 65 inch 8K Neo QLED TV, generation 2022. The TV has a wide color gamut (wider than that of the Display-P3 colorspace).

Because the automatic configuration of TVs is extremely complicated yet inaccurate (in terms of color gamut), it's recommended to change the configuration manually until you like the image that you see, as follows:

Set the picture mode to "Dynamic".

Check that the colorspace was set to "Native", in order to benefit from all the colors that the TV can show. If it wasn't, try to change it manually.

Decrease the saturation in order to keep the colors pleasant. For the test TV, this is 70%.

Decrease the brightness until you like what you see. For the test TV, this is 60%.

Set the color tone to "Standard" (not "Cool", not "Warm").

Change the white balance until the image has no color cast (like bluish or greenish). For the test TV, the red gain is +5, while the blue gain is -15.

Buying an 8K TV makes sense only in certain scenarios, like displaying art and viewing it from up close. The resolution difference between 8K and upscaled 4K can barely be seen from up close (90 cm, 3 ft) on a 65 inch TV, while switching back and forth between the two images. If you buy an 8K TV to display art that will be viewed from up close, its diagonal has to be at least 65 inches.

Art is comfortable to view from a distance of at least one TV diagonal, which means that the 4K resolution is enough for any TV size. At such a distance, even the 2K resolution images look good enough.
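The viewing-distance claims above can be checked with a rough angular-resolution estimate. The sketch below computes the average number of pixels per degree of visual angle across the screen width. The function name is illustrative, and the figure of about 60 pixels per degree as the limit of normal visual acuity is a common rule of thumb, not something stated in this text.

```python
import math

def pixels_per_degree(h_pixels, diagonal, distance, aspect=(16, 9)):
    """Average horizontal pixels per degree; diagonal and distance share one unit."""
    aw, ah = aspect
    width = diagonal * aw / math.hypot(aw, ah)
    fov_deg = 2 * math.degrees(math.atan(width / (2 * distance)))
    return h_pixels / fov_deg

# 65 inch TV (165.1 cm diagonal) viewed from 90 cm.
print(round(pixels_per_degree(3840, 165.1, 90)))  # 50 for 4K
print(round(pixels_per_degree(7680, 165.1, 90)))  # 99 for 8K

# Any 16:9 TV viewed from a distance of one diagonal.
print(round(pixels_per_degree(3840, 1, 1)))  # 82 for 4K
print(round(pixels_per_degree(1920, 1, 1)))  # 41 for 2K
```

Under the rule-of-thumb threshold, 4K at 90 cm from a 65 inch TV falls somewhat short, which fits the observation that 8K is barely distinguishable there, while 4K at one diagonal is comfortably above it.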

If you want to buy a TV to display art, like for an art gallery, buy an OLED TV because of its consistency of colors regardless of the viewing angle, its darker shadows and its richer colors.

If you want to display the genart on a non-OLED display, test how the samples from the Gallery look. Look at the genart with the media viewer, not in a web-browser.

For the most part, the image quality of OLED and QLED is the same, but in some edge cases non-OLED displays look like the cheapest LED displays. These edge cases occur, for example, when the images have large areas of a color, which is the case for genart.

For example, the reddish brown from the Opportunities - Chocolate Eclipse genart is so badly displayed on the test TV that its perceived hue varies (across the display) between reddish brown and light ocher, depending on the viewing angle, with the slightest change in the viewing position having a huge effect on color perception. The colors of this genart appear to be an edge case scenario.

Due to the potential for image retention, the image shown on an OLED display should be changed from time to time.

The visual quality of animations is limited due to the reduced video decoding power of TVs, but is acceptable when viewed from a distance larger than a TV diagonal. Because of this, an animation of exceptional quality, XQ, is included, but it can't be played by TVs.

Summary of the behavior of the test TV, which is likely widespread among TVs:

Supports 30-bit colors, yet the PNGs saved with 48-bit colors and those saved with 24-bit colors look identical, which means that the TV isn't using 30-bit colors to show images. Moreover, some PNGs show more color banding than a computer display which supports only 24-bit colors.

Can't display very high resolution PNG files, not even 8K. To avoid this issue, use the lower resolution PNG files, or the JPG files. Note that a 4K resolution PNG image that's upscaled by the TV to 8K may look better than an 8K JPG (which may show color banding), so test both.

The pixels look visibly worse than the pixels of the display on which the genart was created. For example, for the same 8K image, viewing the images at pixel level (= 1 logical pixel per 1 physical pixel), fine lines that appear smooth on the display, appear jagged on the TV. This issue is visible only when the viewing distance is smaller than the TV's diagonal. The pixel density (per millimeter) is 5.4 for the TV, and 3.5 for the display, so the larger pixels of the display should have had the opposite effect.

Image color management:

In the default colorspace ("Auto"), the colors of animations are very close to those shown by the display on which the genart was created. However, for images, the colors are less saturated.

After the TV was calibrated with a smartphone, the colors lost yellow saturation.

The color profile that's embedded in images is ignored, but this isn't critical because the TV's gamut is close to the gamut of the display on which the genart was created.

The colors of PNGs and JPGs with a YCbCr pixel format look fine; this is how all genart is stored. JPGs with an RGB pixel format are shown with colors that are darker and shifted, and with a lot more visible color banding.

It's possible to switch to the "Native" colorspace in order to benefit from the full gamut of the TV, but the saturation must be decreased in order to keep the colors pleasant.

Has no "Fill (landscape images)" display mode for images / animations, to fill the entire display with a displayed image. This matters, for example, for images with a 16:10 aspect ratio that have a width that exactly fits the TV's resolution; instead, they will be badly scaled to fit the display. The same applies to images with a 16:8 aspect ratio that have a height that exactly fits the TV's resolution.

Scaling produces severe artifacts, including increased color banding, especially when upscaling and especially when the zoom factor isn't an integer. Scaling from 4K to 8K looks good.

It's able to decode videos with a bitrate that is higher than what the specifications say. For example, while the TV is rated to play 8K videos with a maximum bitrate of 80 Mbps, it can smoothly play the Opportunities - Chocolate Eclipse animation with an average bitrate of 90 Mbps, even though the animation has areas where the bitrate goes well above the average, to maintain the visual quality. However, when the average bitrate is 140 Mbps, the TV freezes in certain areas of the animation. This is unfortunate because the animation with the 90 Mbps bitrate has only an acceptable quality, whereas the animation with the 140 Mbps bitrate has a pretty good quality. For comparison, the XQ animation has a bitrate of 570 Mbps, while the maximum exceeds 1'100 Mbps.

Can't seamlessly loop videos. There is an interruption between consecutive loops.
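The bitrates quoted in the list above are easier to compare when converted to data volume and to multiples of the TV's rated maximum. The sketch below does that arithmetic, assuming that 1 Mbps means 1,000,000 bits per second and 1 MB means 1,000,000 bytes; the function name is illustrative.

```python
def megabytes_per_minute(bitrate_mbps):
    """Data volume per minute of video, with 1 Mbps = 1,000,000 bits/s."""
    return bitrate_mbps * 60 / 8

RATED_MBPS = 80  # the rated maximum quoted above for 8K video

for label, mbps in [("plays smoothly", 90), ("freezes", 140), ("XQ average", 570)]:
    print(f"{label}: {mbps} Mbps = {megabytes_per_minute(mbps):.0f} MB/min, "
          f"{mbps / RATED_MBPS:.2f}x the rated maximum")
```

At roughly seven times the rated maximum, the XQ bitrate is far beyond what the TV's decoder is specified for, which is consistent with the XQ animation being playable only on a computer.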